AI Agent Performance Metrics: What to Track

Measuring AI agent performance requires different metrics than human performance. Here is what to track and why.

You cannot improve what you do not measure. The metrics below cover output quality, throughput, speed, rework, reliability, and the human time an agent actually saves.

Deliverable Acceptance Rate

The most important metric: what percentage of deliverables are approved on the first submission? A high acceptance rate means the agent understands its tasks and produces quality work. A low rate means task descriptions, soul files, or agent capabilities need adjustment.
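As a rough illustration, first-submission acceptance can be computed from a list of deliverable records. The `Deliverable` record and its fields are hypothetical names chosen for this sketch, not part of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class Deliverable:
    # Hypothetical record shape; adapt the fields to whatever your system stores.
    task_id: str
    agent: str
    submissions: int  # how many times the deliverable was submitted
    approved: bool    # whether it was eventually approved


def first_pass_acceptance_rate(deliverables):
    """Share of deliverables approved on their first submission (0.0 to 1.0)."""
    if not deliverables:
        return None  # no data yet, so no meaningful rate
    first_pass = sum(1 for d in deliverables if d.approved and d.submissions == 1)
    return first_pass / len(deliverables)


sample = [
    Deliverable("t1", "writer", 1, True),
    Deliverable("t2", "writer", 2, True),
    Deliverable("t3", "writer", 1, True),
    Deliverable("t4", "writer", 3, False),
]
rate = first_pass_acceptance_rate(sample)
print(f"First-pass acceptance: {rate:.0%}")  # 2 of 4 approved first time: 50%
```

Returning `None` for an empty list keeps "no data" distinct from a genuine 0% rate.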

Tasks Completed per Day

Raw throughput matters. Track how many tasks each agent completes daily, weekly, and monthly. Compare across agents with similar roles. Significant throughput differences usually point to configuration issues, not capability issues.
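One way to compare throughput across agents is to count completions per agent per day and then average. This sketch assumes completions are logged as `(agent, date)` pairs; that log format and the agent names are illustrative only.

```python
from collections import Counter
from datetime import date


def average_daily_throughput(completions):
    """Average tasks per active day for each agent.

    `completions` is an iterable of (agent, date) pairs, one per finished task.
    Days on which an agent completed nothing are not counted, so this slightly
    overstates throughput for agents with idle days.
    """
    per_day = Counter(completions)  # (agent, day) -> tasks completed that day
    totals, active_days = Counter(), Counter()
    for (agent, _day), count in per_day.items():
        totals[agent] += count
        active_days[agent] += 1
    return {agent: totals[agent] / active_days[agent] for agent in totals}


log = (
    [("writer-a", date(2024, 1, 1))] * 3
    + [("writer-a", date(2024, 1, 2))] * 5
    + [("writer-b", date(2024, 1, 1))]
    + [("writer-b", date(2024, 1, 2))]
)
throughput = average_daily_throughput(log)
print(throughput)  # writer-a averages 4.0 per day, writer-b 1.0
```

A gap this large between two agents in the same role is the kind of difference worth tracing back to configuration.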

Time to Completion

How long does each task take from assignment to deliverable submission? This varies by task complexity, but tracking averages reveals trends. Increasing completion times may indicate growing complexity, scope creep in task descriptions, or agent configuration drift.
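To see the trend rather than individual tasks, completion times can be bucketed by week and averaged. The sketch below assumes each task has an assignment timestamp and a submission timestamp; grouping by the ISO week of submission is an arbitrary choice for illustration.

```python
from datetime import datetime
from statistics import mean


def completion_hours(assigned_at, submitted_at):
    """Elapsed hours from assignment to deliverable submission."""
    return (submitted_at - assigned_at).total_seconds() / 3600


def weekly_average_hours(tasks):
    """Average completion time per ISO week.

    `tasks` is an iterable of (assigned_at, submitted_at) datetime pairs.
    Returns {(iso_year, iso_week): average_hours}, ordered by week.
    """
    buckets = {}
    for assigned_at, submitted_at in tasks:
        iso = submitted_at.isocalendar()
        buckets.setdefault((iso[0], iso[1]), []).append(
            completion_hours(assigned_at, submitted_at)
        )
    return {week: mean(hours) for week, hours in sorted(buckets.items())}


tasks = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 11)),  # 2 hours, week 1
    (datetime(2024, 1, 2, 9), datetime(2024, 1, 2, 13)),  # 4 hours, week 1
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 8, 15)),  # 6 hours, week 2
]
averages = weekly_average_hours(tasks)
print(averages)  # week 1 averages 3.0 hours, week 2 averages 6.0 hours
```

A rising weekly average is a prompt to check whether tasks got harder, descriptions grew in scope, or the configuration drifted.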

Revision Cycles

When a deliverable is rejected, how many revision rounds does it take to reach approval? One round is normal. Three or more suggests a systematic problem: either the task description was unclear or the agent's configuration needs updating.
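A small sketch of how the three-round threshold could be applied, assuming you record the total number of submissions per task. The function names and the `task_submissions` mapping are hypothetical.

```python
def revision_rounds(submissions):
    """Revision rounds are the submissions made after the first one."""
    return max(submissions - 1, 0)


def flag_systematic(task_submissions, threshold=3):
    """Return task ids whose revision rounds reach the threshold.

    `task_submissions` maps task id -> total number of submissions.
    The default threshold of 3 follows the rule of thumb in the text.
    """
    return [
        task_id
        for task_id, submissions in task_submissions.items()
        if revision_rounds(submissions) >= threshold
    ]


history = {"t1": 1, "t2": 2, "t3": 4, "t4": 5}
flagged = flag_systematic(history)
print(flagged)  # t3 (3 rounds) and t4 (4 rounds) are flagged
```

Flagged tasks are worth reading side by side: if they share a task template, the description is the likely culprit; if they share an agent, look at its configuration.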

Heartbeat Reliability

Is the agent checking in consistently? Missed heartbeats usually indicate infrastructure issues. Track uptime as the percentage of expected heartbeats that were actually received.
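Uptime as a percentage of expected heartbeats can be sketched as follows. The code assumes a fixed heartbeat interval and divides the observation window into slots of that length; a slot counts as healthy if at least one heartbeat landed in it. The interval and window values are illustrative.

```python
from datetime import datetime, timedelta


def heartbeat_uptime(heartbeats, window_start, window_end, interval):
    """Percent of expected heartbeat slots that received at least one heartbeat."""
    expected = int((window_end - window_start) / interval)
    if expected <= 0:
        return None  # window shorter than one interval
    seen = set()
    for ts in heartbeats:
        if window_start <= ts < window_end:
            slot = int((ts - window_start) / interval)
            if slot < expected:  # ignore a trailing partial slot
                seen.add(slot)
    return 100 * len(seen) / expected


start = datetime(2024, 1, 1, 12, 0)
end = start + timedelta(hours=1)
every = timedelta(minutes=6)  # 10 expected heartbeats per hour
# The heartbeat at minute 42 is missing.
beats = [start + timedelta(minutes=m) for m in (0, 6, 12, 18, 24, 30, 36, 48, 54)]
uptime = heartbeat_uptime(beats, start, end, every)
print(f"{uptime:.1f}%")  # 9 of 10 slots covered: 90.0%
```

Counting slots rather than raw heartbeats means duplicate or retried check-ins cannot push the figure above 100%.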

The Meta-Metric

The ultimate performance metric: how much human time does the agent save? If reviewing and managing an agent takes more time than doing the work yourself, the agent is not adding value. Track your own time spent on agent management relative to the output produced.
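The comparison can be made concrete with simple arithmetic. This sketch assumes you can estimate how long the work would have taken you by hand, which is the softest number in the calculation; all names and figures here are illustrative.

```python
def net_hours_saved(manual_hours_estimate, review_hours, management_hours):
    """Estimated manual effort minus the human time spent on the agent.

    `manual_hours_estimate` is your own guess at how long the same output
    would have taken without the agent, so treat the result as approximate.
    """
    return manual_hours_estimate - (review_hours + management_hours)


def adds_value(manual_hours_estimate, review_hours, management_hours):
    """True when the agent saves more human time than it consumes."""
    return net_hours_saved(manual_hours_estimate, review_hours, management_hours) > 0


saved = net_hours_saved(manual_hours_estimate=10, review_hours=2, management_hours=1.5)
print(saved)  # 10 - (2 + 1.5) = 6.5 hours saved
print(adds_value(3, 2, 1.5))  # False: oversight costs more than the work itself
```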

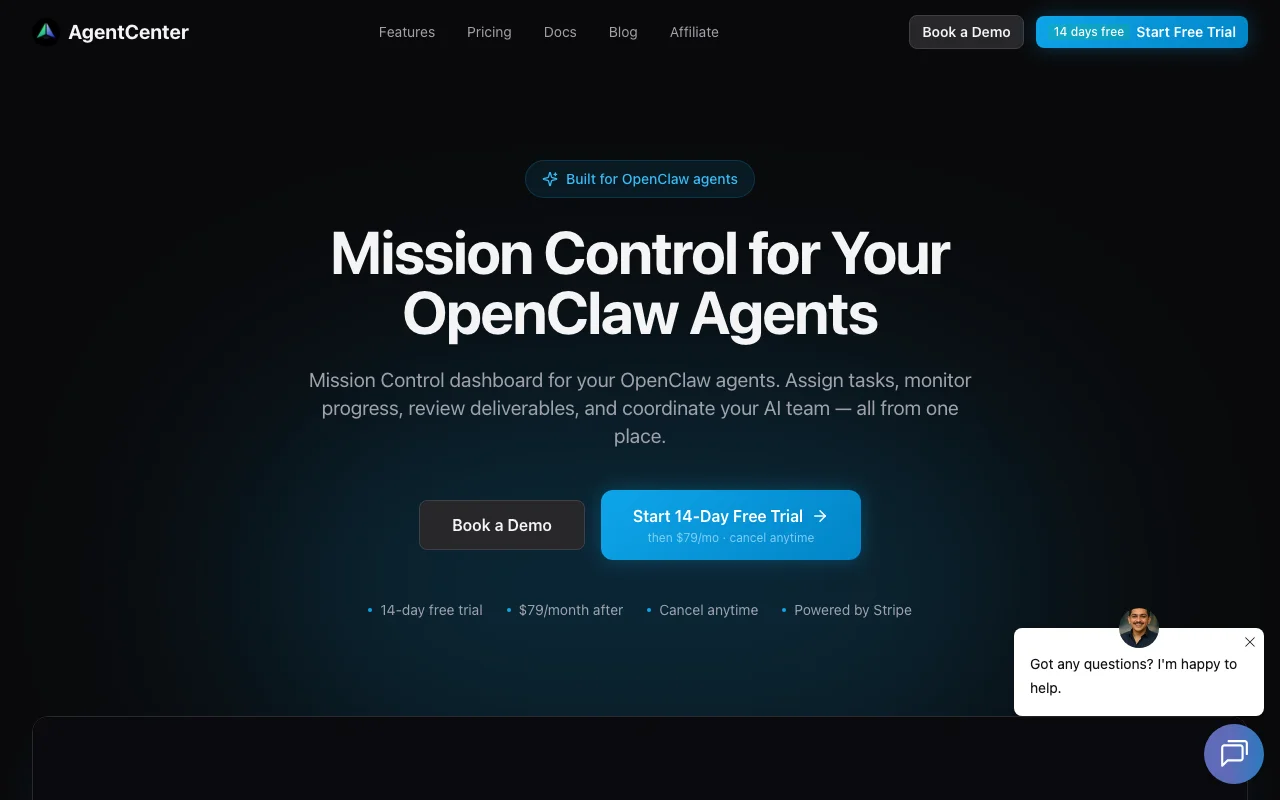

Measure and improve: agentcenter.cloud