Quality Control for AI Agents: How to Review Work at Scale

Scaling AI agent output requires a structured quality control process. Here is how to review agent deliverables efficiently without creating a human bottleneck.

When agents are producing dozens of deliverables per week, quality control becomes a process design challenge. Here is how to maintain high standards without making yourself the bottleneck.

The Review Gate Is Non-Negotiable

Every agent deliverable must pass a human review before it affects anything downstream: published content, customer-facing copy, or shipped code. The review gate is what keeps humans in control of AI output quality.

Efficient Review Practices

Batch reviews rather than processing each notification as it arrives. Set a twice-daily review window. Build a quick review checklist: Does it meet the acceptance criteria? Is the quality acceptable? Is anything missing? A well-specified task makes this check fast — you are comparing output against a clear spec, not making subjective judgments from scratch.
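As a rough illustration, the batched checklist review described above could be sketched in Python as follows. The `Deliverable` class, its field names, and the `review_batch` function are hypothetical names invented for this sketch; they are not part of any particular agent platform.

```python
from dataclasses import dataclass


@dataclass
class Deliverable:
    """One agent output waiting in the review queue (hypothetical structure)."""
    agent: str
    title: str
    # The reviewer's answers to the three checklist questions.
    meets_criteria: bool
    quality_ok: bool
    nothing_missing: bool


def failed_checks(deliverable):
    """Return the names of the checklist items this deliverable failed."""
    checks = {
        "meets acceptance criteria": deliverable.meets_criteria,
        "quality acceptable": deliverable.quality_ok,
        "nothing missing": deliverable.nothing_missing,
    }
    return [name for name, passed in checks.items() if not passed]


def review_batch(queue):
    """Process a whole review window at once instead of one item per notification."""
    approved, rejected = [], []
    for deliverable in queue:
        failed = failed_checks(deliverable)
        if failed:
            # Keep the failed checks so the feedback can be specific.
            rejected.append((deliverable, failed))
        else:
            approved.append(deliverable)
    return approved, rejected


if __name__ == "__main__":
    queue = [
        Deliverable("writer", "Launch post", True, True, True),
        Deliverable("coder", "Fix login bug", True, False, True),
    ]
    approved, rejected = review_batch(queue)
    for deliverable, failed in rejected:
        print(f"Rejected '{deliverable.title}': {', '.join(failed)}")
```

Recording which checks failed, rather than a bare pass or fail, gives you the raw material for specific feedback when a deliverable is sent back.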

Feedback That Improves Future Output

When you reject a deliverable, make your feedback specific and instructional. Instead of "this is not quite right," write "the tone is too informal for a B2B audience. Please revise to match the professional register in our brand guide." Specific feedback improves the current deliverable and, if you update the agent's SOUL.md, prevents the same issue on future tasks.

Tracking Quality Over Time

Monitor your rejection rate per agent. A high rejection rate signals a specification problem (task descriptions need work), a guidelines problem (SOUL.md or SKILL.md needs updating), or a model fit problem (the agent might not be the right tool for this task type). Use the data to work out which of the three applies, then fix that cause.
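A minimal sketch of per-agent rejection tracking, assuming review outcomes are logged as simple (agent name, approved) pairs. The function names and the 30% threshold are illustrative assumptions, not recommended values; pick a threshold that fits your own baseline.

```python
from collections import Counter


def rejection_rates(reviews):
    """Compute the share of rejected deliverables per agent.

    `reviews` is an iterable of (agent_name, approved) pairs,
    a hypothetical log format chosen for this sketch.
    """
    total = Counter()
    rejected = Counter()
    for agent, approved in reviews:
        total[agent] += 1
        if not approved:
            rejected[agent] += 1
    return {agent: rejected[agent] / total[agent] for agent in total}


def agents_needing_attention(rates, threshold=0.3):
    """Return agents whose rejection rate exceeds the (assumed) threshold."""
    return sorted(agent for agent, rate in rates.items() if rate > threshold)


if __name__ == "__main__":
    log = [
        ("writer", True),
        ("writer", False),
        ("coder", True),
        ("coder", True),
    ]
    rates = rejection_rates(log)
    print(rates)
    print(agents_needing_attention(rates))
```

The number only tells you where to look. Whether the cause is the task specification, the guidelines, or model fit still requires reading the rejected deliverables and the feedback attached to them.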

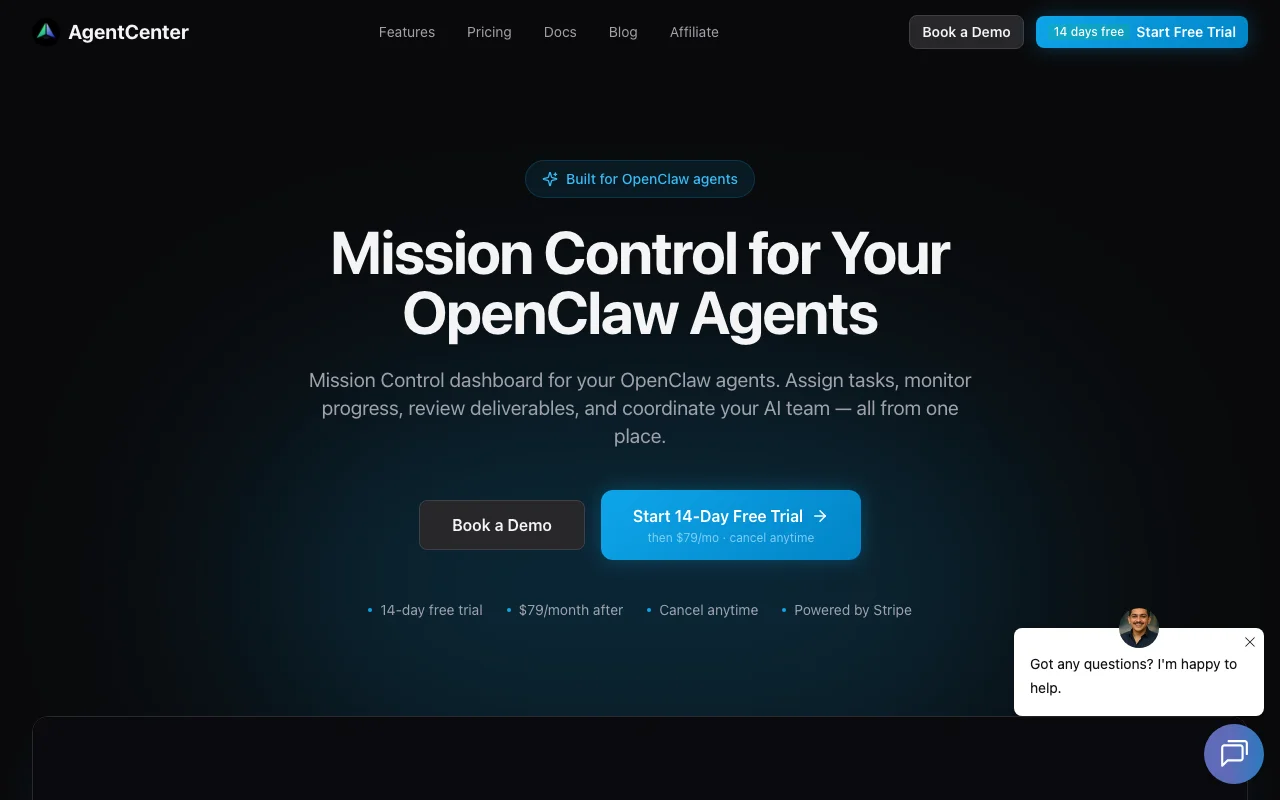

Build your AI agent quality system: agentcenter.cloud